When I started in information security over two decades ago, digital forensics was a highly tactile discipline. We showed up to data centers with hardware write-blockers, pulled physical hard drives out of racks, and imaged them byte-for-byte. The “scene of the crime” was static; the evidence patiently waited for us to arrive.

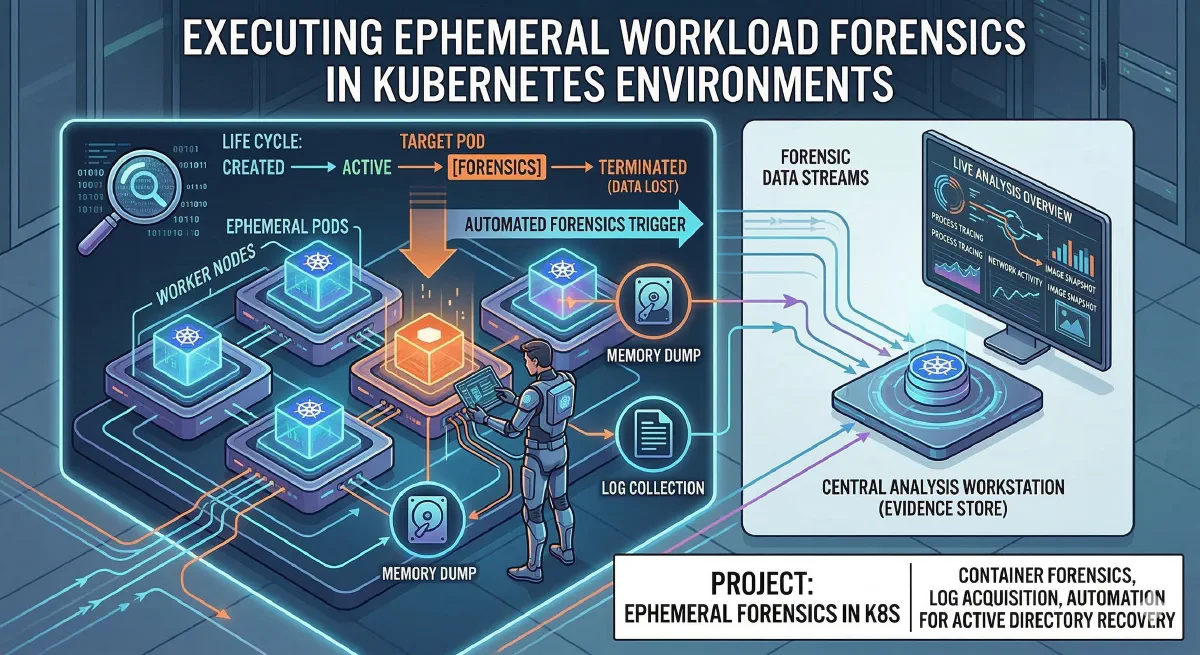

Fast forward to 2026. The modern data center is a sprawling, abstract web of Kubernetes clusters where the scene of the crime—a compromised Pod—might only exist for 45 seconds before a Horizontal Pod Autoscaler (HPA) terminates it.

Traditional Digital Forensics and Incident Response (DFIR) methodologies fundamentally break down in ephemeral environments. If your security operation relies on an analyst receiving an alert, sipping their coffee, and opening a terminal to investigate, you’ve already lost. The malicious process has completed its objective, the container has been killed, and the evidence has vanished into the digital ether.

Executing forensics in Kubernetes requires a paradigm shift: from reactive data recovery to proactive forensic readiness. We must architect our clusters to trap, capture, and preserve evidence in milliseconds, all without tipping off the adversary.

Here is how elite security teams are executing ephemeral workload forensics today.

The Observer Effect: Why Legacy Methods Fail

Historically, when a security operations center (SOC) detected a potentially compromised container, the instinct was to run kubectl exec to poke around, or use kubectl debug to attach an ephemeral container for troubleshooting.

From a forensic standpoint, this is disastrous.

First, interactive sessions pollute the environment, muddying the timeline and altering file system timestamps. Second, modern cloud-native malware is highly aware. Advanced strains monitor for anomalous Tty sessions or unexpected processes spawning in their namespace. The moment they detect an analyst poking around, they wipe their in-memory payloads and self-terminate.

We can no longer afford to “pause” or manually investigate running containers. We need tools that operate entirely out-of-band.

The eBPF Revolution: Deep-Kernel Telemetry

By 2026, extended Berkeley Packet Filter (eBPF) has universally replaced the clunky, high-overhead sidecar agents of the past. For the forensic investigator, eBPF is the ultimate, invisible observer.

Because eBPF programs hook directly into the Linux kernel, they capture telemetry at the system call level. They don’t care what the application developer logged to stdout, nor do they rely on user-space abstractions that malware can easily bypass.

Tools like Cilium Tetragon, Aqua Tracee, and Falco allow us to build a high-fidelity “flight data recorder” for our clusters.

- Process Ancestry: Tetragon provides exact parent-child process trees, allowing us to trace a bizarre outbound network connection back to a heavily obfuscated Python script executed by a vulnerability in a web server.

- Syscall Tracing: Tracee logs low-level syscalls—showing exactly when an attacker unlinked a file to hide it from standard

lscommands or attempted to read a sensitive service account token.

Crucially, because this data is pulled from the kernel space, the attacker has no visibility into the fact that they are being observed.

The Holy Grail: The Kubernetes Checkpointing API

Capturing logs and network flows is critical, but what about memory forensics? How do we capture fileless malware or extract encryption keys from a container that is about to be terminated?

The answer lies in the Kubernetes Forensic Container Checkpointing API, which revolutionized cloud-native DFIR when it matured into a core feature.

Leveraging the underlying Container Runtime Interface (CRI) via containerd or CRI-O, this API allows us to create a stateful, cryptographic snapshot of a running container—capturing its process memory, open file descriptors, and network sockets—without stopping the container.

How It Works in the Field

Instead of risking the observer effect, we issue an API call to the Kubelet hosting the suspicious Pod. The Kubelet silently instructs the runtime to dump the state into an archive file on the underlying node.

The attacker’s processes keep running, completely unaware that a perfect replica of their memory space has just been cloned. We can then use tools like checkpointctl to unpack this archive offline, feeding the raw memory dumps directly into Volatility3 to hunt for injected shellcode, hidden rootkits, or exfiltrated secrets in a secure, isolated sandbox.

Automated Incident Response (AIR): The “Trigger-Capture” Pattern

Because ephemeral workloads disappear so quickly, the moment of capture must be automated. The industry standard workflow for 2026 is the Trigger-Capture-Preserve pattern.

We wire our runtime detection engines (e.g., Falco) into an event-driven automation mesh (like Argo Events or FalcoSidekick). The workflow operates in milliseconds:

- Trigger: Falco detects an unexpected

execvesyscall (e.g.,/bin/bashspawning from an Nginx worker process). - Capture: Before the SOC even gets the alert, the automation mesh instantly calls the Kubernetes Checkpointing API to dump the container’s memory and state.

- Preserve: A DaemonSet running on the node immediately ships the checkpoint archive, along with the preceding 10 minutes of eBPF telemetry, to an immutable storage bucket.

- Quarantine: Instead of terminating the Pod—which alerts the attacker—a dynamic NetworkPolicy (or an Istio service mesh rule) is applied to sever the Pod’s external command-and-control (C2) communication.

The Pod is kept alive but trapped in a digital glass box, allowing analysts to monitor its behavior safely.

Chain of Custody in a Cloud-Native World

As a consultant, I frequently work with organizations dealing with strict regulatory compliance. A massive challenge in ephemeral forensics is proving chain of custody in court. If a container no longer exists, how do you prove the artifacts you pulled from it are authentic?

To solve this, our automated forensic pipelines must inherently support zero-trust evidence handling.

- When a container checkpoint is taken, it must be automatically hashed (SHA-256).

- The archive and its hash must be offloaded directly to WORM (Write-Once-Read-Many) storage, such as AWS S3 with Object Lock or GCP Bucket Lock.

- We use cryptographic signing (via tools like Sigstore/Cosign) to guarantee that the forensic telemetry correlates exactly to the specific container image digest that was running at the time.

Final Thoughts: Architecting for the Inevitable

Executing forensics in Kubernetes environments is no longer an afterthought—it is an architectural mandate. We cannot bolt DFIR onto a cluster after a breach has occurred. The infrastructure must be designed from day one to expect compromise, observe it silently, and trap the evidence before the orchestrator wipes the slate clean.

The days of pulling physical hard drives are long gone. In 2026, the best forensic investigators are part systems architect, part kernel hacker, and part automation engineer. By embracing eBPF telemetry, the Checkpointing API, and automated trigger-capture pipelines, we ensure that no matter how ephemeral the workload, the evidence is always permanent.